Product surface

EasyReader

Productized Android UX backed by local model integration instead of remote summarization shortcuts.

Offline-first Android reading software with local summaries, persistent state, and an interface built for daily use.

Built as a reader people can return to, with discovery, persistence, local file ingestion, and reading-state management.

Uses llmedge for on-device summaries so user text stays local and the AI feature remains inside the product workflow.

Emphasizes Android product craft as much as model integration.

Kotlin / Jetpack Compose / Room / Hilt / llmedge

Product scope

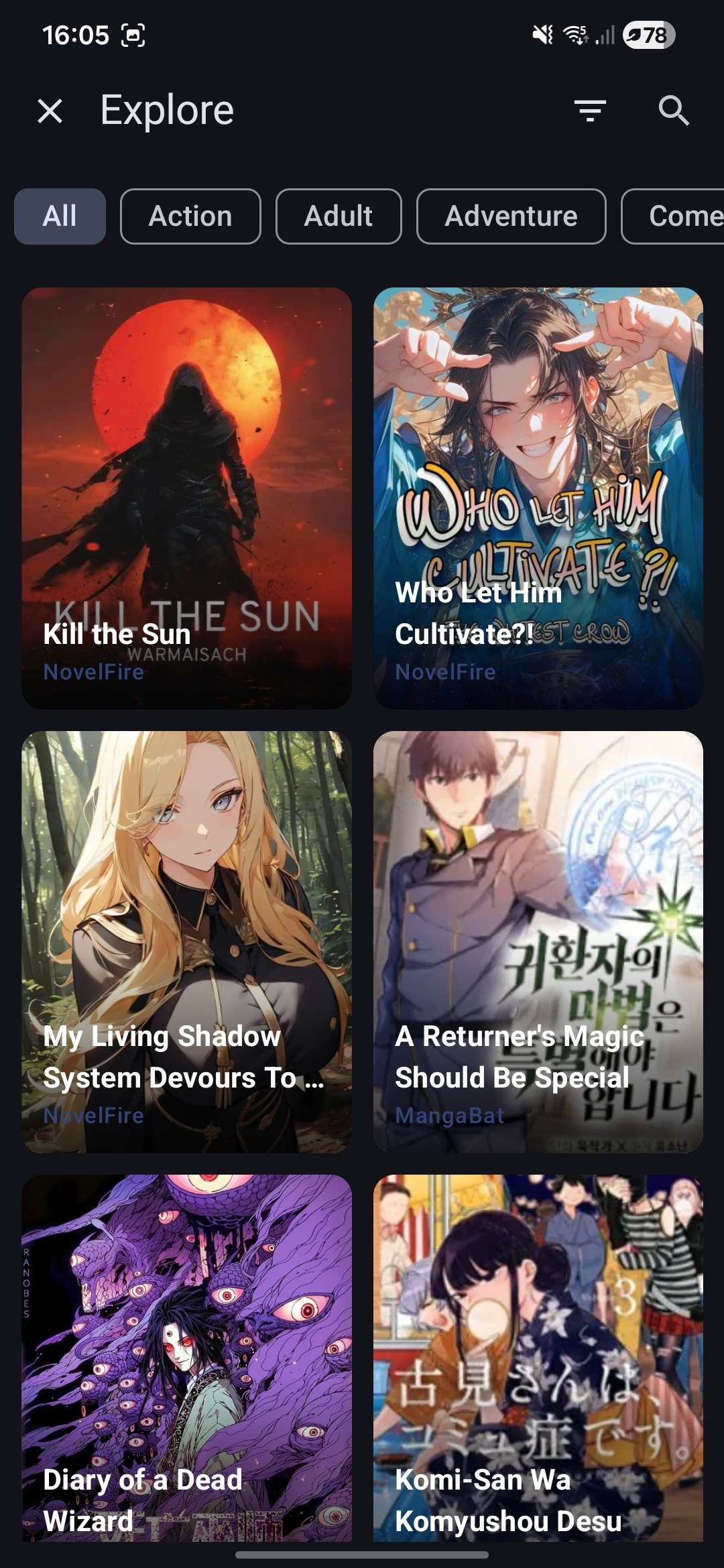

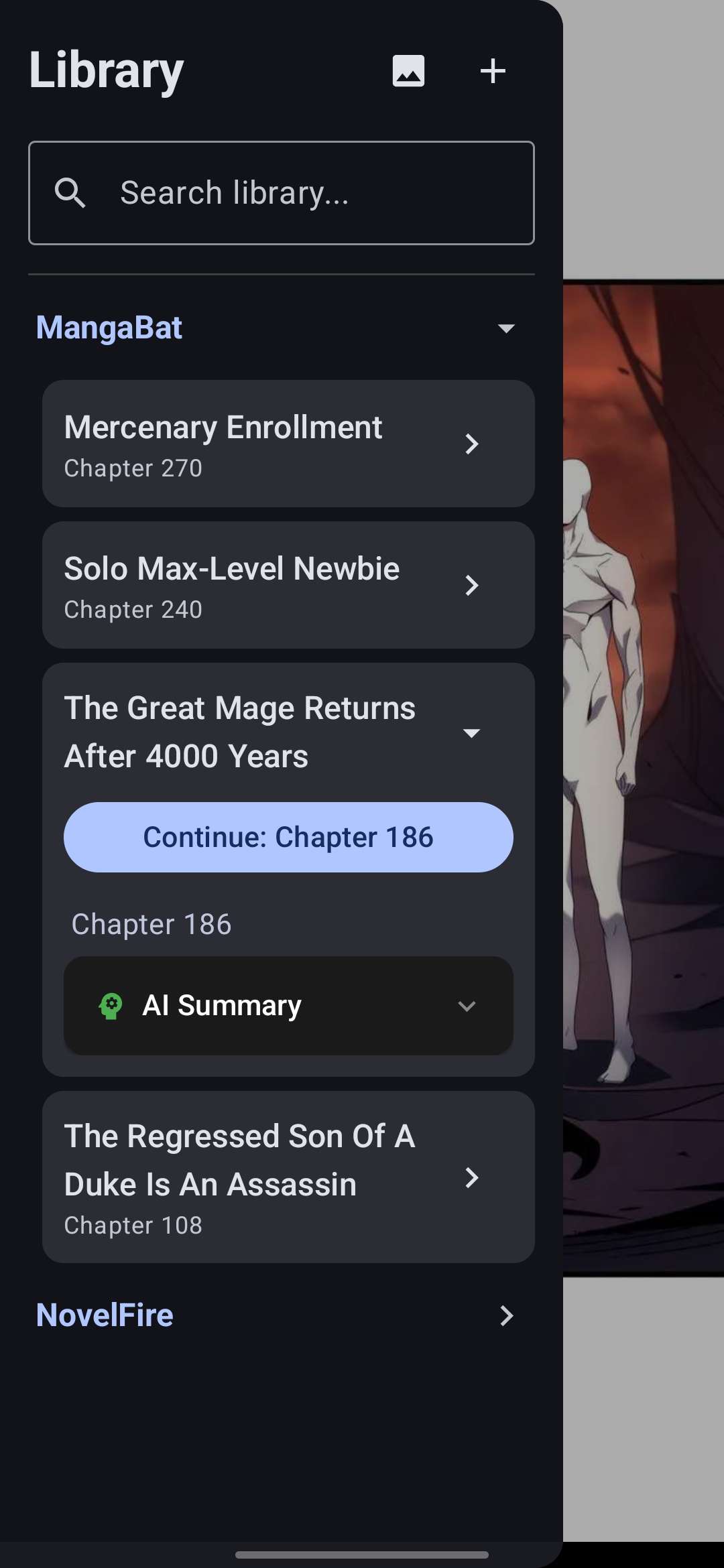

Discovery, library, reader, and summary workflow

Reading inputs

Web chapters, PDF, EPUB, and local HTML

AI mode

On-device chapter summaries through llmedge

Product focus

Offline-first Android UX

Workflow framing

A daily-use reading product, not a thin wrapper around summarization.

I built EasyReader around browsing, persistence, reader ergonomics, and multi-format ingestion first. Local AI matters here because it supports the product flow instead of trying to replace the app’s core job.

MainActivity coordinates reader, library, explore, deep links, and local file selection.

ContentRepository normalizes remote chapters, PDF, EPUB, and HTML into one reading pipeline.

SummaryService uses llmedge to fetch a local model and summarize chapters on the device, so user text never has to leave the phone.

Compose, Room, and Hilt hold the application together as a durable Android product rather than a prototype shell.
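To make the "one reading pipeline" idea concrete, here is a minimal sketch of how different inputs could be normalized into a single chapter shape. It is not the actual ContentRepository code: `ReadingSource`, `RawDocument`, `Chapter`, and `ContentNormalizer` are hypothetical names, and the format-specific parsing (PDF, EPUB, HTML, network) is assumed to live behind the injected `load` function.

```kotlin
// Hypothetical types for illustration; none of these names come from the EasyReader codebase.
sealed interface ReadingSource {
    data class WebChapter(val url: String) : ReadingSource
    data class Pdf(val path: String) : ReadingSource
    data class Epub(val path: String) : ReadingSource
    data class LocalHtml(val path: String) : ReadingSource
}

// What a format-specific loader is assumed to hand back before normalization.
data class RawDocument(val title: String, val body: String)

// The single shape the reader screen consumes, regardless of where the text came from.
data class Chapter(val id: String, val title: String, val text: String)

class ContentNormalizer(private val load: (ReadingSource) -> RawDocument) {
    fun toChapter(source: ReadingSource): Chapter {
        val raw = load(source)
        // The sealed hierarchy makes this `when` exhaustive, so a new format
        // cannot be added without deciding how it is identified.
        val id = when (source) {
            is ReadingSource.WebChapter -> "web:${source.url}"
            is ReadingSource.Pdf -> "pdf:${source.path}"
            is ReadingSource.Epub -> "epub:${source.path}"
            is ReadingSource.LocalHtml -> "html:${source.path}"
        }
        // Collapse runs of whitespace so every format reaches the reader in the same form.
        val text = raw.body.replace(Regex("\\s+"), " ").trim()
        return Chapter(id = id, title = raw.title.trim(), text = text)
    }
}
```

With this shape, the reader only ever sees `Chapter`, and supporting another input format means adding one `ReadingSource` case plus a loader rather than touching the reading surface.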

Product signals

Shows product sense without abandoning local-systems discipline.

Bridges persistent state, ingestion, and on-device inference inside one mobile workflow.

Demonstrates that edge AI work can ship in software people actually use repeatedly.

Engineering notes

EasyReader is the clearest product-focused project in my portfolio. It uses local AI, but I do not want it presented as “an app with summaries.” The stronger angle is that it is a real Android reading product that happens to integrate on-device summarization well.

Product framing

The app’s core job is reading:

- discovering content

- organizing a library

- opening multiple input formats

- preserving reading state

- staying usable offline

That matters because the AI feature only works if the surrounding product is solid. The summary capability belongs inside a broader reading flow, not as a gimmick floating above the app.

Why the architecture is interesting

I structured the app around a clean product pipeline:

- ingestion is normalized through repository code

- the reading surface stays separate from acquisition and persistence concerns

- local summaries are delegated through llmedge rather than baked directly into UI code

- Room and Hilt support a real application lifecycle
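The separation between UI code and the summarization backend can be sketched roughly as follows. This is an illustration under assumptions, not the app's real code: `ChapterSummarizer`, `SummaryUiState`, and `SummaryPresenter` are hypothetical names, the llmedge API is deliberately not shown, and a production version would likely run the call in a coroutine off the main thread.

```kotlin
// Hypothetical seam between the UI layer and the inference backend.
// An llmedge-backed implementation would sit behind this interface; its API is not shown here.
fun interface ChapterSummarizer {
    fun summarize(chapterText: String): String
}

// The only thing the UI needs to render.
sealed interface SummaryUiState {
    object Idle : SummaryUiState
    data class Ready(val summary: String) : SummaryUiState
    data class Failed(val reason: String) : SummaryUiState
}

// UI-facing holder: it knows about the interface, never about the model runtime.
class SummaryPresenter(private val summarizer: ChapterSummarizer) {
    var state: SummaryUiState = SummaryUiState.Idle
        private set

    fun requestSummary(chapterText: String) {
        state = if (chapterText.isBlank()) {
            SummaryUiState.Failed("Nothing to summarize")
        } else {
            try {
                SummaryUiState.Ready(summarizer.summarize(chapterText))
            } catch (e: Exception) {
                // Surface backend failures as state instead of crashing the reader.
                SummaryUiState.Failed(e.message ?: "Summarization failed")
            }
        }
    }
}
```

Because the presenter depends only on `ChapterSummarizer`, a fake such as `SummaryPresenter { text -> text.take(80) }` can stand in for the model in tests, and a dependency-injection container like Hilt could supply the on-device implementation in the app.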

This is different from my runtime-heavy projects, but it complements them. It proves I can turn infrastructure into a usable mobile product.

The AI story

The AI angle is still important, but it is intentionally scoped:

- chapter summaries run on device

- user text does not need to leave the phone

- the summary feature supports the reading experience rather than replacing it

That is a stronger signal than a generic “AI assistant” layer because it shows my product judgment about where local inference actually adds value.

Result

EasyReader shows end-to-end delivery: Android UX, ingestion, persistence, navigation, and local AI integration tied together into software I would present as a product, not a demo.